17/3/2023

South by Southwest (SXSW) is back with a regular edition in 2023, fully in person in Austin, Texas, after one cancelled edition, one online edition and one hybrid edition, all due to the COVID-19 pandemic.

With a record number of Brazilians, the event has the inglorious mission of talking about innovation and anticipating trends in a world that changes rapidly, all the time. Is it still possible?

From here in Brazil, I tried to follow the news from there to understand what took so many fellow Brazilians to the land of Uncle Sam. I had a strange “déjà vu” with Gowalla being reintroduced in the same place, 14 years later; I read the reactions to the much-anticipated, albeit perfunctory, talks by C-level executives from companies such as Patagonia and OpenAI; and I felt the reverberations of the always acclaimed predictions of futurist Amy Webb.

This time around, Amy called attention to generative artificial intelligences, such as ChatGPT, a trend that has already reached the public.

In 2022, web3, cryptocurrencies, and the metaverse set the tone for the hybrid edition of SXSW. Mark Zuckerberg made an appearance to announce NFTs on Instagram, and Vice called the event an effort to create a “pathetic tech future” propelled by marketing.

The effort, as anyone can see, was thwarted. This week Meta buried NFTs, and terms like web3, metaverse and other related nonsense were run over by OpenAI’s chatbot, a technology that distinguishes itself from previous trends by trivial details such as being somewhat useful.

What trends are being talked about at SXSW? And for whom?

Outside, not far from that bubble of optimism filled with “futurists”, market speakers and brand “activations”, the world that provides the kind of innovation that has a guaranteed stage at SXSW kind of imploded, with the collapse of Silicon Valley Bank and the announcement of another mass layoff at Meta.

The fact that the most intense coverage of SXSW, at least here in Brazil, has appeared in outlets specialized in advertising is a symptom of a problem that has been lingering for a long time and became clear in this year’s return to normality: much more than “technology, music, and movies”, SXSW is an event for advertising, or for advertisers.

SXSW seems to have become an excuse for advertising executives to go to the US, blow off some steam and come back with extravagant ideas in their luggage. Ideas that, in many cases, don’t even make sense in the Global South. (This may be news to some, but Brazil is located in the Global South.)

An executive from Itaú bank, the first Brazilian master sponsor of SXSW, justified the investment with the cliché that one must be tuned into the future. “Everything is so fast-paced, and technology has advanced so much, that it is no longer enough to look at the now.” Where were these people last week?

The discomfort and incomprehension are not only mine. People there felt it too. From here, Aori Sauthon perhaps summed up the feeling better than anyone else: “Folks look for trends in Texas, but they’ve never been to Madureira [a Rio de Janeiro neighborhood].”


8/3/2023

On Monday (6), Meta reached an agreement with the European Union (EU) about WhatsApp’s privacy policy.

The mess began in January 2021, when WhatsApp updated its privacy policy to open a loophole in its end-to-end encryption for conversations between individual and business users.

In Europe, Meta pledged to allow WhatsApp users to decline the new policy (and future updates) without being harassed “ad infinitum” by popups. (To this day, more than two years later, I have to dismiss the popup asking me to accept that privacy policy. Every. Single. Day.)

Meta has also assured the EU that it does not share personal data of European citizens who use WhatsApp with outside companies nor with its own (Facebook and Instagram) for advertising purposes.

All very nice, albeit overdue, but what about the rest of the world?

I sent two questions to WhatsApp in Brazil:

- Will the terms of the agreement [with the EU], such as requiring WhatsApp, after changing its privacy policy, to show the user a “no” button and to stop showing the popup asking for acceptance, apply to other regions of the world, specifically Brazil?

- Is the statement that “personal data [from WhatsApp] is not shared with third parties or other Meta companies, including Facebook, for advertising purposes” valid for Brazil?

To the first, WhatsApp’s spokesperson responded that they will not comment.

To the second, a binary question, the kind you answer with “yes” or “no”, they sent a link to the WhatsApp documentation. When I insisted on a direct answer, I got back two more links.

I have read the documentation in all three links (together they add up to ~5,800 words and took half an hour to read), and based on it I can conclude that, outside Europe, Meta uses WhatsApp user data for Facebook advertising purposes, with one subtle exception.

In the longest link, an endless table that explains the different ways WhatsApp handles user data, the last line details the sharing of information “with the Meta Companies to operate, provide, improve, understand, customize, support, develop and market the Meta Companies products, features and services,” including:

Improving Meta Companies services and your experiences using them, such as personalizing features and content, helping you complete purchases and transactions, and showing relevant offers and ads across the Meta Company Products in accordance with their own specific terms and privacy policies (for example, for integrations like WhatsApp Shops), remembering that users have to opt-in to chat with businesses.

In the next column, WhatsApp explains what data is shared. Among the items, “your account information”. This same item provides an exception: users who already had a WhatsApp account in 2016 and opted out of sharing app data with Facebook and other Meta companies. This “opportunity” was given after another privacy policy update back then.

At the time, Meta (then called Facebook) broke a promise made when it bought WhatsApp: that it would not cross-reference data from the app with Facebook’s. The notice asking users to accept the then-new terms and privacy policy explicitly stated the intent of the change, as the image above shows. I only have it in Portuguese; the text next to the acceptance toggle reads:

Share my WhatsApp account data with Facebook to improve my experiences with ads and products on Facebook. Your conversations and phone number will not be shared with Facebook, regardless of this setting.

It follows, therefore, that Meta treats its users unequally, depending on where they live. In Europe, WhatsApp data is not used for advertising purposes by Facebook and Instagram. In the rest of the world, yes — unless you signaled otherwise in a narrow 30-day window in 2016.

3/3/2023

Created when Jack Dorsey was CEO of Twitter, Bluesky is a kind of social network reimagined as an open protocol, called AT Protocol.

An app for iOS was released this week, giving the public the first taste of what the developers — who at some point in the past emancipated themselves from Twitter — are up to.

For now, access to Bluesky is by limited invitation only. I got one and now tell you what this “blue sky” alternative to Twitter is like.

Bluesky’s app is a sort of Twitter reduced to the bare minimum needed for the basic social networking experience.

In some areas, such as the welcome screen, it is evident that it is a half-baked thing at this point, still lacking the polish expected from finished apps that are ready for the general public.

After registration, which, I repeat, only works for those who have an invitation, what is revealed is a pretty forgettable social networking experience along the lines of Twitter:

- There are only three tabs: main feed, search, and notifications.

- Search only covers accounts; it doesn’t work for posts/content, although it does display some posts under the label “Recently, on Bluesky…” when opened.

- There is a floating button for new posts, which can be up to 256 characters long.

- It’s possible to upload images.

- The settings are minimal, only allowing you to log in and manage multiple profiles.

As for content, the first few hours of Bluesky presented me with lots of posts and “RTs” from Jay Graber, CEO of Bluesky, posts from Brazilians, and pictures of the blue sky.

A word about protocols

An important detail of Bluesky is the username. Mine, for example, became ghedin.bsky.social. The part after the first period is a domain.

Yes, you have seen this: it is a similar structure to Mastodon, which is based on another open/decentralized protocol, ActivityPub.
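To make that username structure concrete, here is a minimal Python sketch that splits a handle like mine into the part before the first period and the domain after it. The function name `split_handle` is my own invention for illustration; the sketch only manipulates the string and does not talk to Bluesky or implement any part of the AT Protocol.

```python
def split_handle(handle: str) -> tuple[str, str]:
    """Split a handle such as 'ghedin.bsky.social' into (name, domain)."""
    # Tolerate a leading "@" and stray whitespace, and compare case-insensitively.
    handle = handle.strip().lstrip("@").lower()
    # Everything before the first period is the name; the rest is the domain.
    name, separator, domain = handle.partition(".")
    if not separator or not name or not domain:
        raise ValueError(f"not a handle with a domain part: {handle!r}")
    return name, domain


name, domain = split_handle("ghedin.bsky.social")
print(name)    # ghedin
print(domain)  # bsky.social
```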

As with Mastodon, it will be possible to run Bluesky on different servers, and users will have control over their data, being able to migrate to another server/instance while leaving nothing behind.

Bluesky CEO Jay Graber, in announcing the “private beta” program, listed the features the team intends to highlight in the coming months:

- Domain names as usernames & account portability;

- Algorithmic choice & custom feeds;

- Composable moderation & reputation systems.

The goal of the Bluesky app is more to be a proof of concept than anything else.

In announcing Bluesky’s private beta, Graber said that:

We expect the app to serve as a reference client for developers to learn how to build on atproto, as well as a landing place for users to see how a decentralized social app can be pleasant to use, customizable, performant, and safe.

The mastodon in the room

All this would be revolutionary if we didn’t already have Mastodon. The promises of both ActivityPub and AT Protocol are very similar, and ActivityPub has the advantage of being a de facto standard and not being owned by a private company.

Bluesky’s app is rudimentary, but functional. Even though it works differently, it reminds me a lot of Damus, the app based on Nostr, another open protocol.

It is too soon to say what Bluesky is about. The first impression is… ok? Congratulations, that’s a nice app you have there, but why someone would choose it over Mastodon/ActivityPub, or even over Twitter, is the big question still unanswered.

Bluesky is available for iOS. To get on the waiting list for an invite, just leave your email address on the official site.

23/2/2023

A recurring comment from people who post stuff on the internet and give Mastodon/fediverse a try is the (high) engagement and reach they get there.

Even with much smaller audiences than on Twitter, posts usually get more likes, “boosts” (RTs), and clicks. How can that be?

Wall Street Journal tech columnist Christopher Mims has a good hypothesis:

To me, the answer is pretty simple: Twitter tries to aggregate as much attention as possible around stuff that goes mega-viral.

There are only so many minutes in the day. So for stuff to “blow up big” necessitates that most of the rest of the posts from people we might actually want to hear from must go unseen.

The logic of the fediverse is to deliver the content that someone asked to receive, as in following other people, without an opaque filter (the “algorithm”) in between.

This logic spreads attention more thinly and more widely: less viral content of the kind that gets on TV and that even your grandma knows about, and more small, organic content spreading across the web in niches. More diversity, more inclusion, more chances for more people to be heard.


15/2/2023

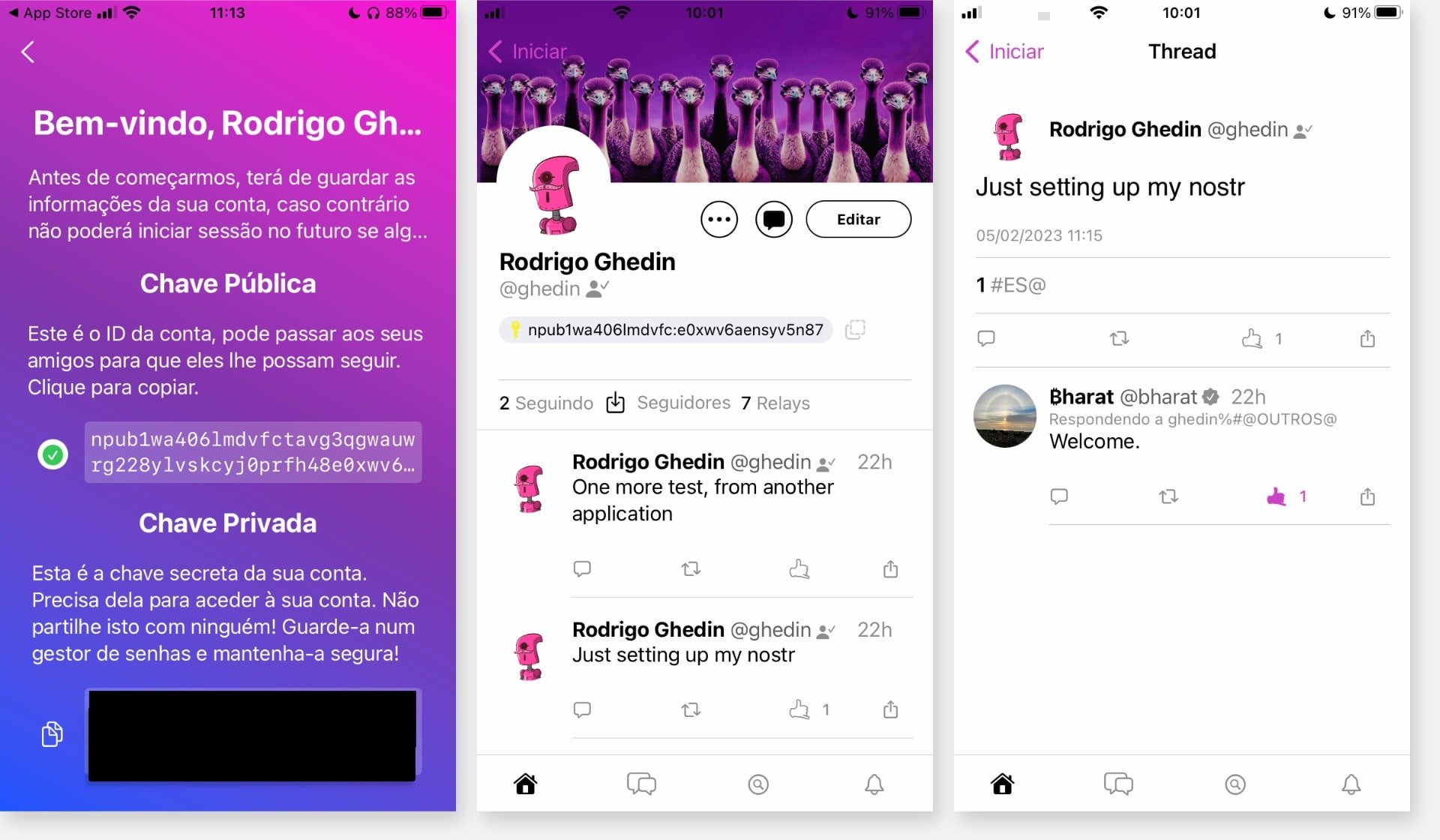

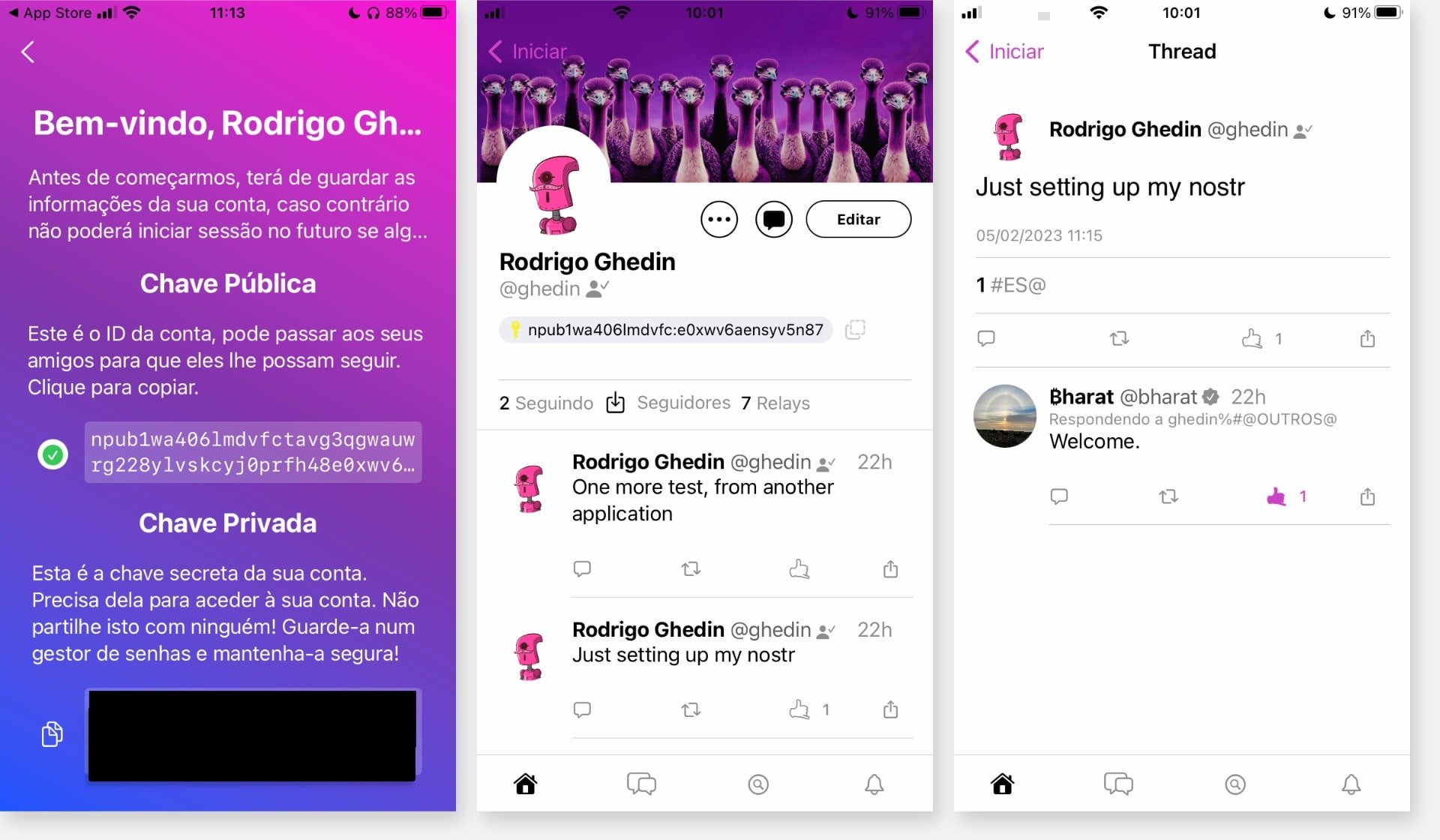

“A milestone for open protocols…” This is how Jack Dorsey, co-founder and former CEO of Twitter, announced the arrival of the Damus app, a Nostr protocol client, in the App Store/iOS.

Nostr has generated buzz among developer groups, bitcoin addicts, and a crowd that is very suspicious of its own shadow. The reason: Nostr brings an alternative to commercial social media that, in the words of its creators, would be “truly censorship-resistant.”

But Nostr isn’t a new social network. Nostr is a protocol, something more like the web (HTTP/S) and email (IMAP/SMTP) than Twitter or Instagram.

In practice, Nostr is a foundation on which developers build applications. Its main differentiator is the authentication system, based on cryptographic keys. Experts praise the simplicity of the protocol, which in theory facilitates the development of apps.

(Hold my hand and come with me because now things get a little weirder).

To create a profile/an identity in Nostr, you need to enter or create a pair of keys:

- One of these is private and functions as the “password” for logging into the apps. It is vital to guard this key well: if you lose it, there is no way to regain access to the account, and if someone else gets hold of it, they can impersonate you and there is no way to reverse that.

- The other is the public one, and it is the equivalent of your @username on Nostr. When you share your profile you do not indicate an @username, but your public key.

Both keys are jumbles of letters and numbers. It is like that code from Nintendo’s virtual network, only (much) worse.

Want to follow me on Nostr? Search for npub1wa406lmdvfctavg3qgwauwrg228ylvskcyj0prfh48e0xwv6aensyv5n87.

Yeah, I know.
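For the curious, that jumble is not random-looking by accident. As far as I understand it, Nostr's NIP-19 writes the 32-byte public key in bech32, the same text encoding Bitcoin uses for addresses (BIP-173), with the prefix `npub`. The Python sketch below is my own minimal take on that encoding, assuming that reading of NIP-19 is right; the function names are mine, the key is random throwaway data, and it is a sketch rather than a vetted library.

```python
import os

CHARSET = "qpzry9x8gf2tvdw0s3jn54khce6mua7l"  # bech32 alphabet (BIP-173)
GENERATOR = (0x3B6A57B2, 0x26508E6D, 0x1EA119FA, 0x3D4233DD, 0x2A1462B3)


def polymod(values):
    """Checksum function from BIP-173."""
    chk = 1
    for value in values:
        top = chk >> 25
        chk = ((chk & 0x1FFFFFF) << 5) ^ value
        for i, gen in enumerate(GENERATOR):
            if (top >> i) & 1:
                chk ^= gen
    return chk


def hrp_expand(hrp):
    """Spread the human-readable prefix into 5-bit values for the checksum."""
    return [ord(c) >> 5 for c in hrp] + [0] + [ord(c) & 31 for c in hrp]


def convert_bits(data, from_bits, to_bits, pad):
    """Regroup a sequence of from_bits-wide integers into to_bits-wide ones."""
    acc, bits, out = 0, 0, []
    mask = (1 << to_bits) - 1
    for value in data:
        acc = (acc << from_bits) | value
        bits += from_bits
        while bits >= to_bits:
            bits -= to_bits
            out.append((acc >> bits) & mask)
    if pad and bits:
        out.append((acc << (to_bits - bits)) & mask)
    elif not pad and (bits >= from_bits or (acc << (to_bits - bits)) & mask):
        raise ValueError("invalid padding")
    return out


def encode(hrp, payload):
    """Encode raw bytes as '<hrp>1<data><checksum>'."""
    data = convert_bits(payload, 8, 5, pad=True)
    pm = polymod(hrp_expand(hrp) + data + [0] * 6) ^ 1
    checksum = [(pm >> 5 * (5 - i)) & 31 for i in range(6)]
    return hrp + "1" + "".join(CHARSET[d] for d in data + checksum)


def decode(text):
    """Return (hrp, payload bytes); raise ValueError on typos."""
    hrp, _, rest = text.lower().rpartition("1")
    data = [CHARSET.index(c) for c in rest]  # ValueError on foreign characters
    if polymod(hrp_expand(hrp) + data) != 1:
        raise ValueError("bad checksum")
    return hrp, bytes(convert_bits(data[:-6], 5, 8, pad=False))


public_key = os.urandom(32)  # stand-in for a real public key
npub = encode("npub", public_key)
print(npub)  # 63 characters starting with "npub1"
print(decode(npub) == ("npub", public_key))
```

The one upside of the format is the checksum at the end: mistype a character and `decode` refuses the key instead of quietly pointing you at someone else.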

With your private key, you can log into any app and feel at home with your content and connections.

Another difference between Nostr and conventional social networks is that its structure is based on “relays,” which work somewhat like “nodes” in a peer-to-peer network.

“[Relays] allow Nostr clients to send them messages, and they may (or may not) store those messages and broadcast those messages to all other connected clients,” says Nostr.how, a good interactive Nostr tutorial.

In Nostr.watch you can see the active relays in real time and details such as latency and country of origin. At the time of writing this there are 282 active relays worldwide.
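To picture what relays do, here is a toy model in Python. It is not the Nostr wire protocol (no WebSockets, no signatures, no real event format); `ToyRelay`, `publish_everywhere` and the event fields are names I made up. It only illustrates the idea from the quote above: a client sends the same message to several relays, each relay may or may not keep it, and each one passes it on to whoever is connected.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToyRelay:
    """In-memory stand-in for a relay; purely illustrative."""
    name: str
    keeps_history: bool = True          # a relay may (or may not) store messages
    stored: list = field(default_factory=list)
    subscribers: list = field(default_factory=list)

    def subscribe(self, callback: Callable[[str, dict], None]) -> None:
        self.subscribers.append(callback)

    def publish(self, event: dict) -> None:
        if self.keeps_history:
            self.stored.append(event)
        # Broadcast to every connected client.
        for callback in self.subscribers:
            callback(self.name, event)


def publish_everywhere(relays, event):
    """A client posts the same event to every relay it is connected to."""
    for relay in relays:
        relay.publish(event)


seen = {}  # event id -> names of the relays that delivered it


def reader(relay_name, event):
    seen.setdefault(event["id"], []).append(relay_name)


relays = [ToyRelay("relay-a"), ToyRelay("relay-b", keeps_history=False)]
for relay in relays:
    relay.subscribe(reader)

publish_everywhere(relays, {"id": "note-1", "content": "hello from a toy client"})
print(seen)                                          # {'note-1': ['relay-a', 'relay-b']}
print(len(relays[0].stored), len(relays[1].stored))  # 1 0
```

The reader gets the same note twice, once per relay, which is why real clients have to deduplicate; it is also why losing one relay does not take your posts offline.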

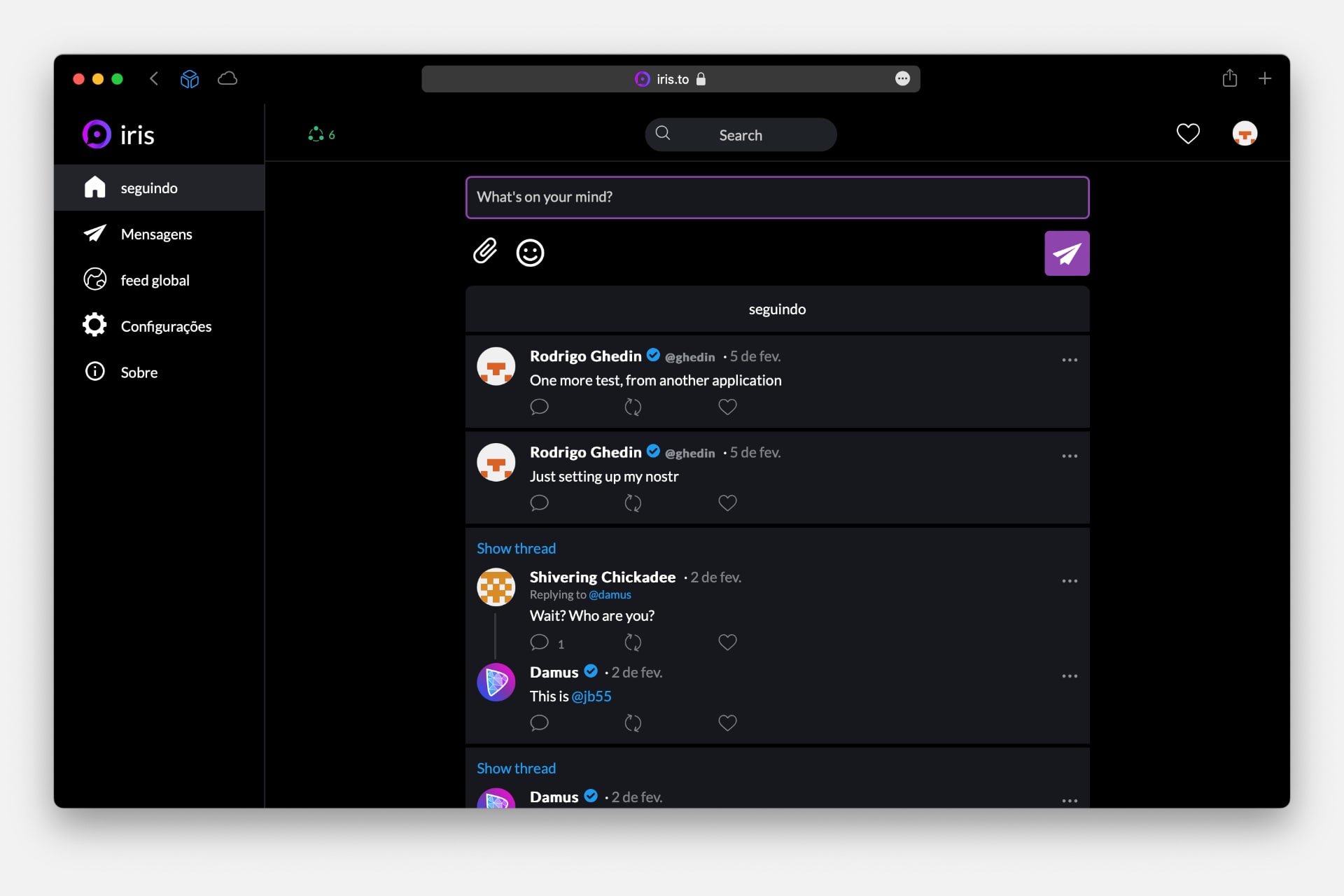

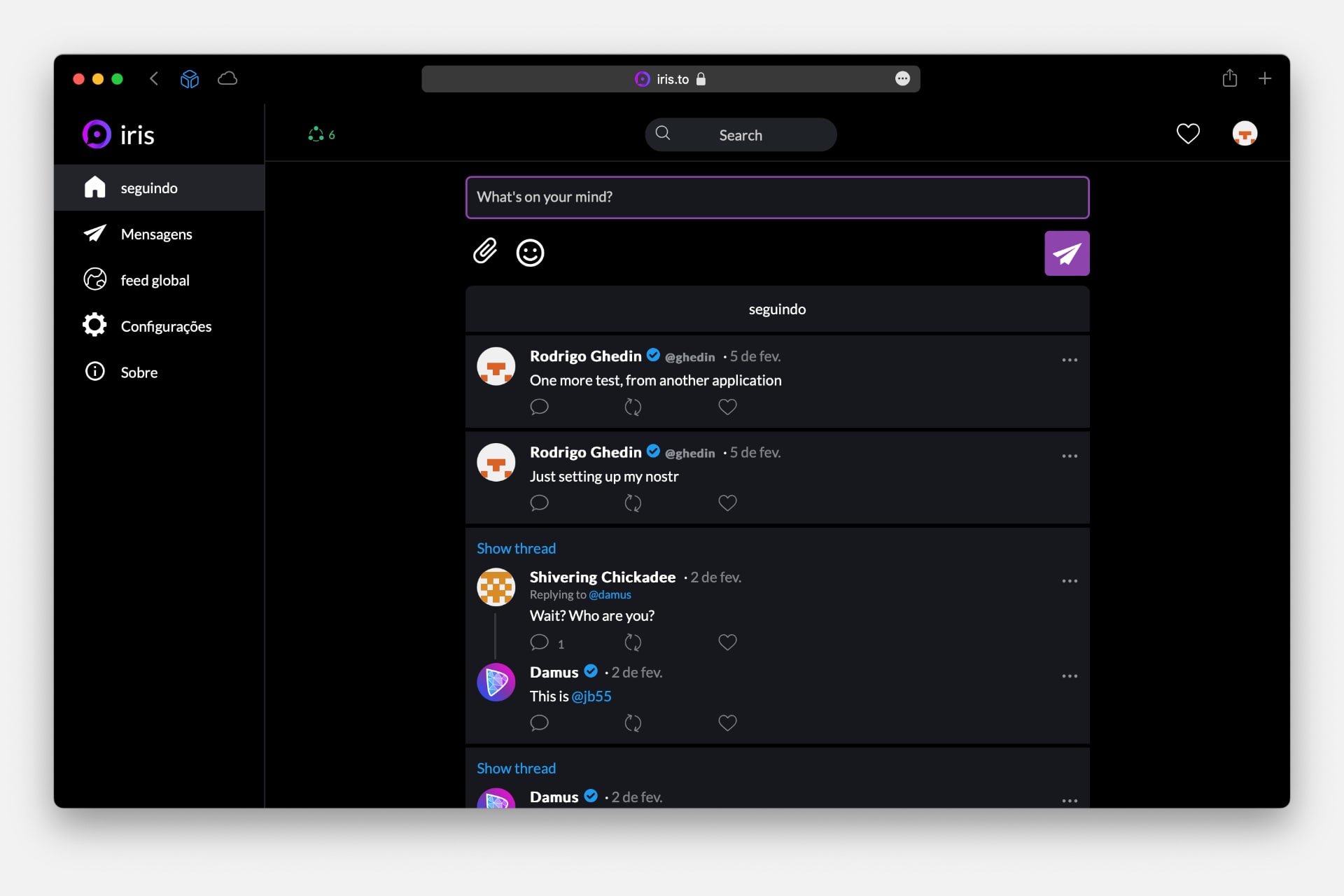

I created my profile in the Damus app and then logged into Iris, a web application (see my profile). And… it works!

When I logged into Iris, I could see the system communicating with the relays — my posts didn’t appear immediately. In one corner of the screen of both apps, Iris and Damus, you can see the number of connected relays.

Both apps are very reminiscent of Twitter, with a timeline, posting box, and direct messages, which here are encrypted end-to-end.

It doesn’t have to be this way, however. Being a protocol, Nostr allows the creation of very different apps on top of it.

There are already quite different apps based on the protocol, such as Jester, a chess game, and Alby, a bitcoin wallet.

Nostr is a kind of “end-of-the-world protocol,” designed for extreme situations where absolute mistrust reigns between those involved — users, relays, and app providers.

If on Twitter you have to trust Twitter, and on Mastodon the administrator of your instance/server, none of this is necessary on Nostr. The protocol is truly decentralized and its use, although connected, is independent of other parties.

Even the identity verification system, called NIP-05, is independent of external validators. It is anchored in domain names: whoever controls a domain publishes which public key belongs to which name on it.
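As I read the NIP-05 text, the check works like this: for an identifier such as `bob@example.com`, a client fetches `https://example.com/.well-known/nostr.json?name=bob` and looks for `bob` under a `names` object, whose value should be the public key in hex. The Python sketch below follows that reading without touching the network; the helper names, the `example.com` identifier and the key are all made up for illustration.

```python
import json
from urllib.parse import quote


def nip05_url(identifier: str) -> str:
    """Build the well-known URL a client would fetch for 'name@domain'."""
    local, _, domain = identifier.partition("@")
    if not local or not domain:
        raise ValueError(f"expected name@domain, got {identifier!r}")
    return f"https://{domain}/.well-known/nostr.json?name={quote(local)}"


def nip05_matches(identifier: str, pubkey_hex: str, document: str) -> bool:
    """Check whether the fetched JSON maps the name to the expected key."""
    local = identifier.partition("@")[0]
    names = json.loads(document).get("names", {})
    return names.get(local, "").lower() == pubkey_hex.lower()


# Made-up key and response body, standing in for what a domain would serve.
fake_key = "ab" * 32
response_body = json.dumps({"names": {"bob": fake_key}})

print(nip05_url("bob@example.com"))
# https://example.com/.well-known/nostr.json?name=bob
print(nip05_matches("bob@example.com", fake_key, response_body))  # True
print(nip05_matches("eve@example.com", fake_key, response_body))  # False
```

No central authority hands out the badge: the only thing being trusted is that the person who controls the domain also controls the file.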

To the creators and promoters of Nostr, the great appeal is an almost full resistance to “censorship”. We know where this conversation is taking us, and… it’s not a good place.

Despite the support of influential people in the industry, like Jack Dorsey, Nostr sounds at the moment like something too complex for mass adoption — far more complex than Mastodon, for example — and without much appeal to normal people who just want to have a laugh on Twitter and look at pictures of celebrities, food, and friends on Instagram.

Much of the complexity lies in the key pair, which is not new: it is a familiar thing to those who work with servers and development. Although key pairs have many advantages, they are risky and difficult to understand for those who are not in the business.

It is no wonder that commercial apps often use abstractions, such as login and password, to make it easier for more people to use them. If people can’t keep track of passwords, imagine a kilometer-long pair of cryptographic keys?

My bet? It might work, but it will be niche, like other creative little protocols that pop up from time to time, such as Gemini, an alternative to the web that looks like the web of the 1990s.

6/2/2023

Being in the fediverse today is a familiar and weird experience at the same time.

On the surface, it’s all very similar to Twitter and other social networks. Yes, the system for following/finding someone is kind of clunky and there are unique features and conventions there, like the “content warning” and the emphasis on image descriptions. These details are quickly learned, though.

Things can (and do) get more complex.

Time and again, differences emerge between instances (themselves, a difficult concept to explain) and groups of users, such as long-time users and newcomers coming from Twitter. And although the power dynamics are good, better than those of commercial alternatives — decentralized, with distributed power — this doesn’t mean that the architecture is ready or does not need adjustments, improvements.

One obvious, long-overdue improvement is to make instance blocking more transparent.

Instances, or communities, have the power to block each other. And they, or most of them, make use of this power. Which is great: the social network Gab, that one for white supremacists? It’s Mastodon under the hood, which doesn’t mean much because almost all other instances preemptively block it.

So far, so good. The problem is that reporting these blocks is right now at the discretion of the instance administrator. There is no way for anyone to know about the blocks made on their behalf, nor about the blocks that other instances have placed on theirs.

At the very least, Mastodon should notify members affected by cross-instance blocks. This would help users stay aware of the administration’s actions and make informed decisions about staying or migrating to another instance. (Migration is a smooth process and you don’t lose followers by doing it.)
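The core of such a notification is not complicated. The Python sketch below is a hypothetical illustration, not Mastodon code or its API: given a list of follow relationships, it finds which members of one instance have connections, in either direction, with accounts on the instance about to be blocked. The function names, the `user@domain` account format and the example domains are all assumptions of mine.

```python
from collections import defaultdict


def domain_of(account: str) -> str:
    """Return the instance part of an account written as 'user@domain'."""
    return account.rsplit("@", 1)[1]


def affected_by_block(follows, local_domain, blocked_domain):
    """Map each local account to the remote accounts it would be cut off from.

    `follows` is an iterable of (follower, followed) pairs.
    """
    affected = defaultdict(set)
    for follower, followed in follows:
        domains = {domain_of(follower), domain_of(followed)}
        # Only relationships that cross between the two instances matter.
        if domains == {local_domain, blocked_domain}:
            if domain_of(follower) == local_domain:
                local, remote = follower, followed
            else:
                local, remote = followed, follower
            affected[local].add(remote)
    return {user: sorted(remotes) for user, remotes in affected.items()}


follows = [
    ("ana@home.example", "bia@blocked.example"),   # ana follows someone there
    ("caio@blocked.example", "ana@home.example"),  # someone there follows ana
    ("ana@home.example", "dani@other.example"),    # unrelated instance
    ("edu@home.example", "bia@blocked.example"),
]

for user, lost in affected_by_block(follows, "home.example", "blocked.example").items():
    print(f"notify {user}: you will lose contact with {', '.join(lost)}")
# notify ana@home.example: you will lose contact with bia@blocked.example, caio@blocked.example
# notify edu@home.example: you will lose contact with bia@blocked.example
```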

A practical example. In late 2022, Brazilian instances blocked another one, called Ursal. Some administrators, such as Donte’s and Bantu’s (both in Portuguese), published posts justifying the decision. We cannot tolerate certain abuses, and we are all grown-ups with limited time. When the administration of one instance fails to act, the administrators of other instances end up having to police that instance’s members on basic civility. This isn’t sustainable, and it’s also pretty lame.

Nothing wrong with blocking instances with bad admins. The problem is that I only found out about the blocking when it was already in effect. The account for my Portuguese-written blog was at Donte and, as much as I now agree with the admin’s decision and praise his hard work, this decision affected the relationship I had with people at Ursal, who, like me, were not aware of the troubles with Ursal’s admin. We were taken by surprise by the break.

When I migrated my blog’s account to its own instance, re-establishing contact with Ursal, someone from Donte questioned me:

Is Ursal blocked on Donte? I had followers and followed people from there. Does this mean that our communication is no longer possible?

Yes, it means exactly that.

A global and automatic notification system would avoid this kind of unpleasant surprise. There’s nothing like that on Mastodon’s roadmap, although a (great) feature suggestion was made on GitHub two years ago.

Despite everything, the decentralized fediverse/Mastodon model has been better than Twitter’s centralized one. If on Elon Musk’s network abuses run wild and are only stopped at the threshold of absurdity (if ever), here on the other side, with ordinary people in charge, talking, playing politics, making mistakes, learning, and trying to get it right, the environment is in general more harmonious, more pleasant.

Quarrels like the one involving Ursal are inevitable. We are, after all, people trying to find a common denominator to live together in an environment where communication is limited, one to many, unnatural. With patience and good will, however, we will go far.